As the title indicates, today I finished up the other shaders in per-pixel mode…

I still need to port them over to OpenGL (I did them in Direct3D because I still don’t trust the OpenGL renderer that much), but that should only take a couple of hours…

Now listening to “Sirenian Shores” by “Sirenia”

Link of the Day: It never ceases to amaze me what people can do in Minecraft:

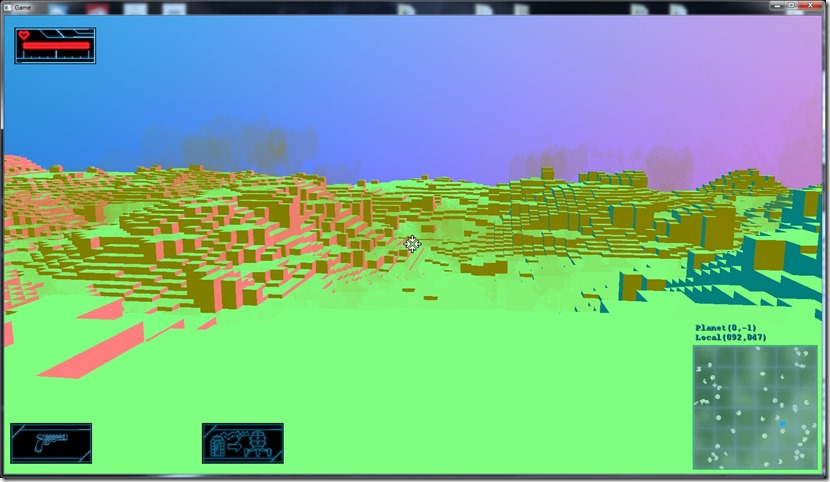

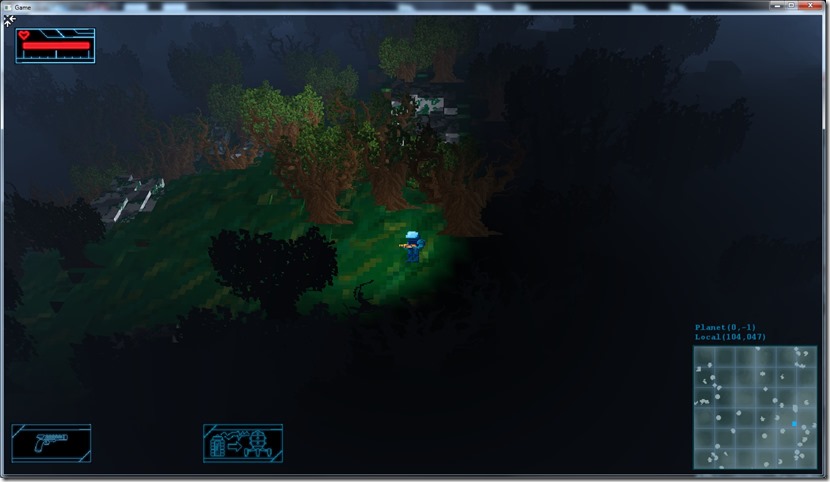

First draft of the per-pixel lighting in “Gateway”… The effect is a bit hard to see, because the vertex density is normally quite high.

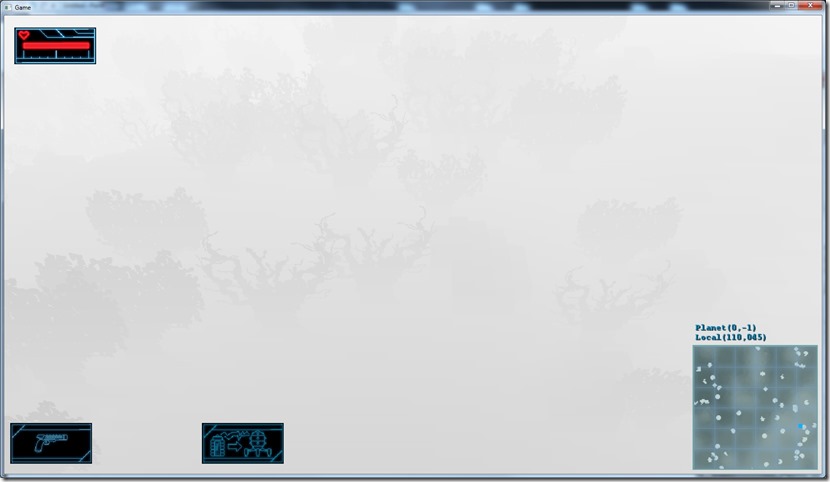

Per vertex:

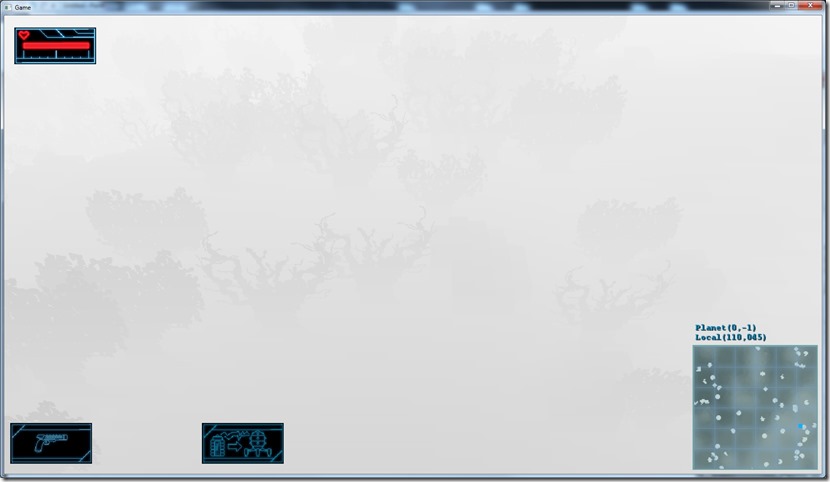

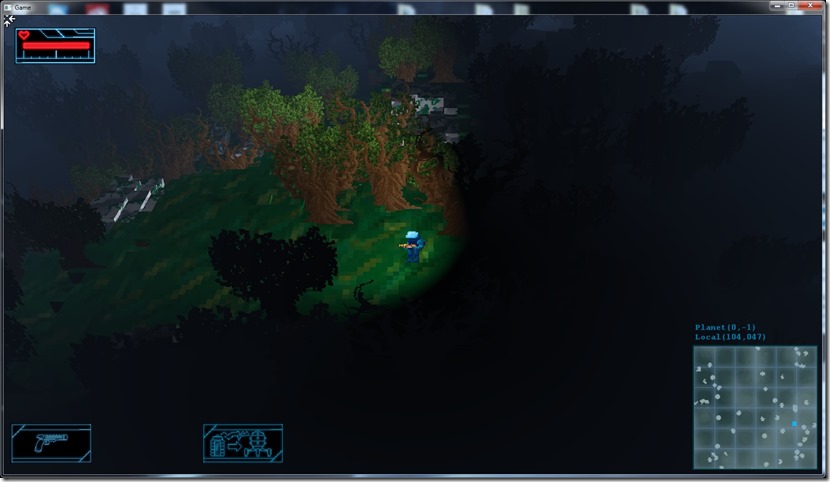

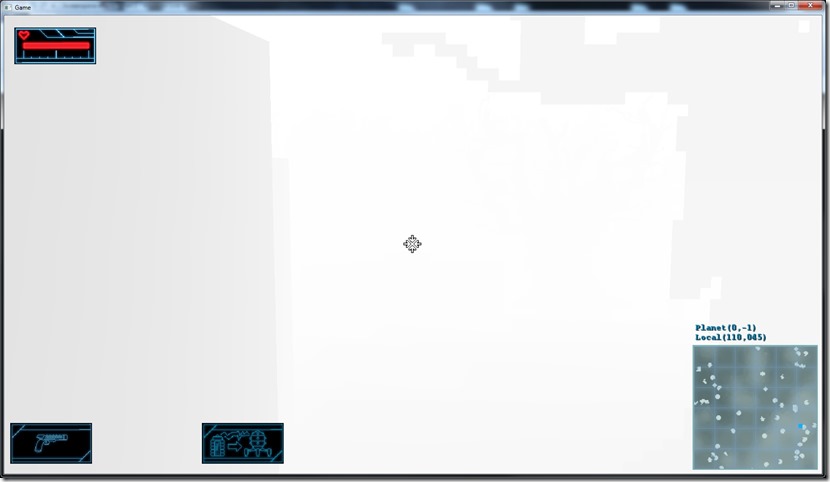

Per pixel:

It’s especially noticeable on the edge of the light behind the player…

Still missing the per-pixel lighting on the other shaders of the game, but it shouldn’t take long…

So now I have an expensive way of doing what I was doing before!  But it opens a lot more possibilities (the desired ambient occlusion, shadows and glows)…
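To see why the difference shows up mostly at the edge of the light, here’s a minimal 1D sketch (my own illustration, not the game’s shader code): a point light with a made-up linear falloff sits over the middle of a long edge. Per-vertex lighting evaluates the light only at the two endpoints and interpolates the result, while per-pixel lighting evaluates it at every sample along the edge.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Hypothetical point-light attenuation: full intensity at the light,
// fading linearly to zero at 'radius' (not the game's actual falloff).
float attenuation(float distance, float radius) {
    assert(radius > 0.0f);
    return std::max(0.0f, 1.0f - distance / radius);
}

// Per-vertex: light the two endpoints of the edge, then linearly
// interpolate the *result* across the edge (t in [0, 1]).
float per_vertex(float x0, float x1, float light_x, float radius, float t) {
    float i0 = attenuation(std::fabs(x0 - light_x), radius);
    float i1 = attenuation(std::fabs(x1 - light_x), radius);
    return i0 + (i1 - i0) * t;
}

// Per-pixel: interpolate the *position* and evaluate the light there.
float per_pixel(float x0, float x1, float light_x, float radius, float t) {
    float x = x0 + (x1 - x0) * t;
    return attenuation(std::fabs(x - light_x), radius);
}
```

With an edge from x = 0 to x = 10, a light at x = 5 and a radius of 4, both endpoints are out of range, so per_vertex() returns 0 all along the edge, while per_pixel() returns 1 at the midpoint. With dense geometry the endpoints sit close together and the two approaches converge, which is why the difference is hard to spot in most of the scene.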


Now listening to “Universal Migrator, Part 1” by “Ayreon”

In my rendering system, I’m using something I call a “render environment” to compile the right version of each shader. It holds data such as how many lights are active and of what types, which components are enabled on the mesh (normals, how many texture coordinates), which render pass we’re doing, etc.

This is stored in a structure that can easily be converted to a number, for fast comparisons as a hash map key:

```cpp
#define MAX_LIGHT 8  // 8 lights, 2 bits of type each
#define MAX_TEX   8  // _map holds one bit per texture (MAX_TEX/8 = 1 byte)

struct Light
{
    unsigned char _type:2;
};

struct Props
{
    // View (2 bits)
    unsigned char _per_pixel:1;
    unsigned char _fog_linear:1;

    // Light (20 bits)
    unsigned char _light_enable:1;
    unsigned char _light_count:3;
    Light _lights[MAX_LIGHT];

    // Material (9 bits)
    unsigned char _map[MAX_TEX/8];
    unsigned char _alpha_test_enabled:1;

    // Mesh (13 bits)
    unsigned char _normal:1;
    unsigned char _psize:1;
    unsigned char _color0:1;
    unsigned char _color1:1;
    unsigned char _tex_coord_count;
    unsigned char _reserved:1;

    // Other
    unsigned char _pass:4;
    unsigned char _reserved_other[2];
};
```

I use bitfields to make the structure smaller. In the example above, the structure should neatly fill 64 bits (8 bytes), so I could just use a 64-bit number to encode it.

The problem is that alignment kicks in. For example, the _lights array should only take 16 bits (MAX_LIGHT is 8, times 2 bits for the type), but each element of the array occupies a full byte, so the array really occupies 8 bytes instead of 2.
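A quick way to check the waste, plus a minimal sketch of the hand-packing alternative: eight 2-bit light types stored in a single 16-bit value. The helper names get_light_type() and set_light_type() are hypothetical, and the exact sizeof(Light) is up to the compiler (1 byte is what the common ones give).

```cpp
#include <cassert>
#include <cstdint>

#define MAX_LIGHT 8

struct Light {
    unsigned char _type : 2;  // only 2 bits used...
};                            // ...but each Light still occupies a whole byte

// 8 lights x 2 bits fit exactly in 16 bits.
static_assert(sizeof(std::uint16_t) * 8 >= 2 * MAX_LIGHT, "16 bits must hold all light types");

// Hypothetical helper: read light i's 2-bit type from bits [2*i, 2*i+1].
inline unsigned get_light_type(std::uint16_t packed, unsigned i) {
    assert(i < MAX_LIGHT);
    return (packed >> (2 * i)) & 0x3u;
}

// Hypothetical helper: return 'packed' with light i's type replaced by 'type'.
inline std::uint16_t set_light_type(std::uint16_t packed, unsigned i, unsigned type) {
    assert(i < MAX_LIGHT && type < 4);
    const unsigned shift = 2 * i;
    packed = static_cast<std::uint16_t>(packed & ~(0x3u << shift));  // clear the old 2 bits
    return static_cast<std::uint16_t>(packed | (type << shift));     // write the new 2 bits
}
```

Setting light 0 to type 3 and light 7 to type 2 gives 0x8003: the same information as the Light array, in 2 bytes instead of 8.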

OK, I can work around that by using a 16-bit number to store the types and taking care of the packing myself:

```cpp
struct Props
{
    // View (2 bits)
    unsigned char _per_pixel:1;
    unsigned char _fog_linear:1;

    // Light (20 bits)
    unsigned char _light_enable:1;
    unsigned char _light_count:3;
    unsigned short _light_type;

    // Material (9 bits)
    unsigned char _map[8/8];
    unsigned char _alpha_test_enabled:1;

    // Mesh (13 bits)
    unsigned char _normal:1;
    unsigned char _psize:1;
    unsigned char _color0:1;
    unsigned char _color1:1;
    unsigned char _tex_coord_count;
    unsigned char _reserved:1;

    // Other
    unsigned char _pass:4;
    unsigned char _reserved_other[2];
};
```

Still, this structure takes 10 bytes instead of 8, because of alignment. There are only 6 bits of bitfields before “_light_type”, so 2 bits go unused there… Filling every bit means reordering the fields, which makes the structure look chaotic instead of organized by function. From the compiler’s perspective it’s the same, but for me it’s a bit more disorganized…

Still, there’s really no way around this, so the structure becomes:

```cpp
struct Props
{
    unsigned char _per_pixel:1;
    unsigned char _fog_linear:1;
    unsigned char _light_enable:1;
    unsigned char _light_count:3;
    unsigned char _alpha_test_enabled:1;
    unsigned char _normal:1;
    unsigned short _light_type;
    unsigned char _map[8/8];
    unsigned char _psize:1;
    unsigned char _color0:1;
    unsigned char _color1:1;
    unsigned char _reserved:1;
    unsigned char _pass:4;
    unsigned char _tex_coord_count;

    // Other
    unsigned char _reserved_other[2];
};
```

So this should fix it, right?

Not yet… There are more alignment issues involved, even though there are no “gaps” in the memory layout anymore…

The 16-bit “_light_type” field wants to be 16-bit aligned (due to compiler optimizations, etc.), so the compiler wastes another byte before it and throws the rest of the layout out of whack, so I have to organize the code like this:

```cpp
struct Props
{
    unsigned char _per_pixel:1;
    unsigned char _fog_linear:1;
    unsigned char _light_enable:1;
    unsigned char _light_count:3;
    unsigned char _alpha_test_enabled:1;
    unsigned char _normal:1;
    unsigned char _map[8/8];
    unsigned short _light_type;
    unsigned char _psize:1;
    unsigned char _color0:1;
    unsigned char _color1:1;
    unsigned char _reserved:1;
    unsigned char _pass:4;
    unsigned char _tex_coord_count;
    unsigned char _reserved_other[2];
};
```

And this one takes 8 bytes, while storing exactly the same data… I’ll have to look into this again when I port the game to Mac and Linux, since their compilers might follow different rules…

This is one of those cases where writing portable code gets a bit hard, when you really care about optimization…

The interesting part is that this bug had been in the system for ages, and the rendering basically worked by chance!
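Since the whole point is to use the structure as a 64-bit key, a compile-time check is a cheap safety net for the Mac/Linux port: if another compiler lays the fields out differently, the build fails instead of the rendering silently working by chance. A minimal sketch using the final layout above; props_key(), clear_props() and ShaderCache are hypothetical names, and the map value is just a placeholder for a compiled shader.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <string>
#include <unordered_map>

struct Props {
    unsigned char _per_pixel : 1;
    unsigned char _fog_linear : 1;
    unsigned char _light_enable : 1;
    unsigned char _light_count : 3;
    unsigned char _alpha_test_enabled : 1;
    unsigned char _normal : 1;
    unsigned char _map[8 / 8];
    unsigned short _light_type;
    unsigned char _psize : 1;
    unsigned char _color0 : 1;
    unsigned char _color1 : 1;
    unsigned char _reserved : 1;
    unsigned char _pass : 4;
    unsigned char _tex_coord_count;
    unsigned char _reserved_other[2];
};

// Breaks the build if a compiler pads Props beyond 64 bits.
static_assert(sizeof(Props) == sizeof(std::uint64_t), "Props must pack into 64 bits");

// Hypothetical: zero every byte so no indeterminate bits leak into the key.
inline void clear_props(Props& p) { std::memset(&p, 0, sizeof p); }

// Hypothetical: reinterpret the 8 bytes as one integer key.
// memcpy is the portable way to do this without strict-aliasing problems.
inline std::uint64_t props_key(const Props& p) {
    std::uint64_t key;
    std::memcpy(&key, &p, sizeof key);
    return key;
}

// Hypothetical cache: render-environment key -> compiled shader (placeholder type).
using ShaderCache = std::unordered_map<std::uint64_t, std::string>;
```

Two environments with the same settings produce the same key, and changing any field (the render pass, say) produces a different one, so the lookup is a single integer hash.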

Now listening to “Rethroned” by “Northern Kings”

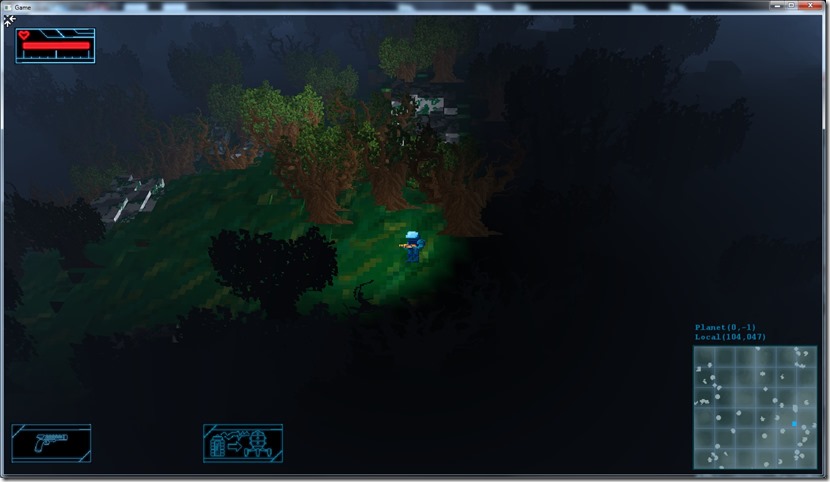

As I mentioned yesterday, because of the way I’m doing alpha testing, if I want to store the depth in the alpha channel of the render target I’m using for the screenspace effects, I need a way to keep the alpha testing without basing it on the final alpha output of the rendering…

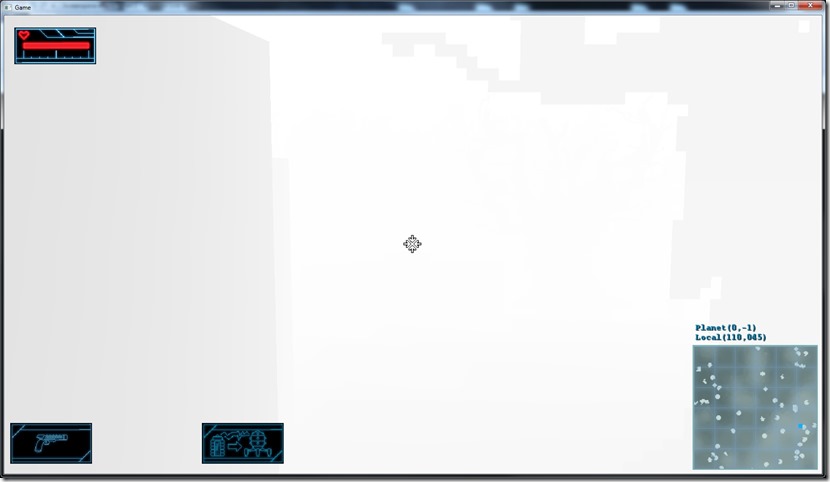

If I just plug it in, I get this:

The results are all wrong because the alpha test ends up using the pixel’s depth instead of its alpha value.

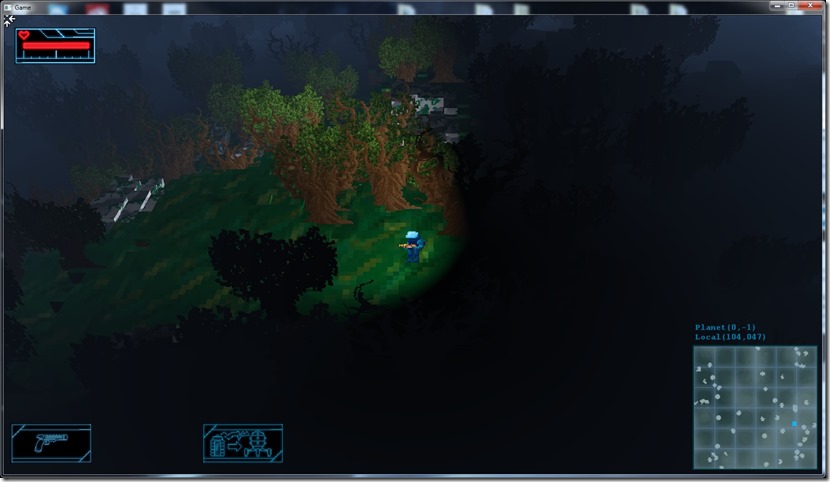

Well, shaders to the rescue… After some work today, the system now does the alpha testing in the shader itself, instead of the “normal” render-state way:

The trees’ appearance changes with distance because I’m writing the depth into the alpha channel, but you can see the tree contours correctly instead of just quads, so it’s working properly.

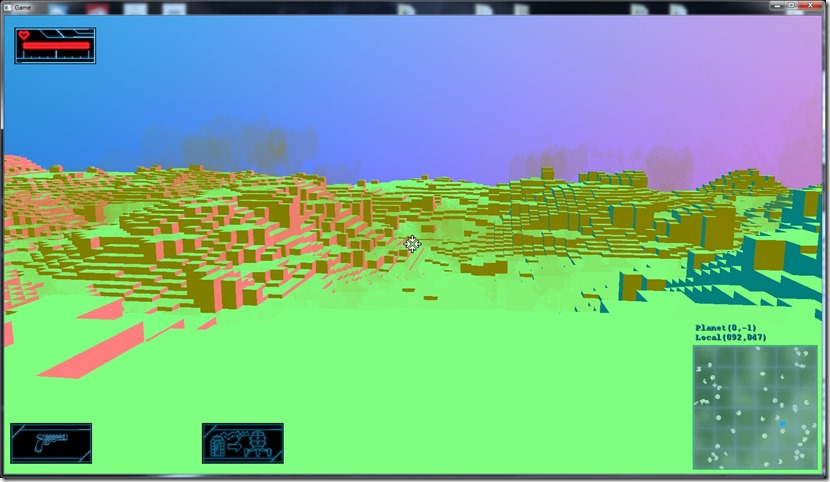

Here’s another shot from a first-person perspective:

Now I need to render this into a higher-precision offscreen buffer instead of the screen, and then I can start using that buffer as a data source for the ambient occlusion.
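A minimal sketch of the shader-side alpha test, written as a CPU-side C++ model of the pixel shader rather than actual shader code (Float4, shade_pixel() and the alpha_ref parameter are hypothetical names). The test compares the texture’s alpha inside the shader, so the output alpha channel is free to carry the depth; in HLSL the discard would typically be done with clip(), in GLSL with discard.

```cpp
#include <cassert>
#include <optional>

struct Float4 { float r, g, b, a; };

// Hypothetical model of the pixel shader (not the game's shader code).
// texel: sampled diffuse texture; depth: the pixel's depth in [0, 1].
// Returns std::nullopt when the pixel is discarded.
std::optional<Float4> shade_pixel(const Float4& texel, float depth, float alpha_ref) {
    assert(depth >= 0.0f && depth <= 1.0f);
    // Alpha test against the texture alpha, inside the shader...
    if (texel.a < alpha_ref) {
        return std::nullopt;  // discarded: nothing is written for this pixel
    }
    // ...so the output alpha channel can hold the depth instead.
    return Float4{texel.r, texel.g, texel.b, depth};
}
```

A render-state alpha test would compare the output alpha (now the depth) against the reference value, which is exactly what produced the broken result above.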

Now listening to “Upon Haunted Battlefields” by “Thaurorod”

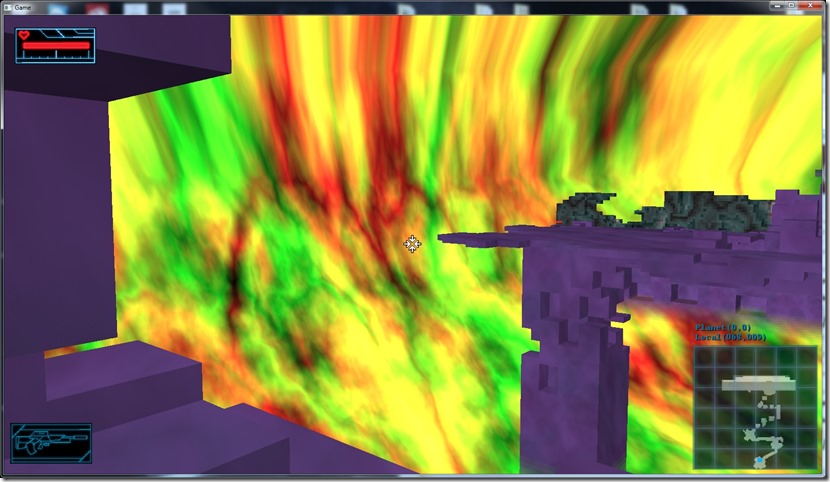

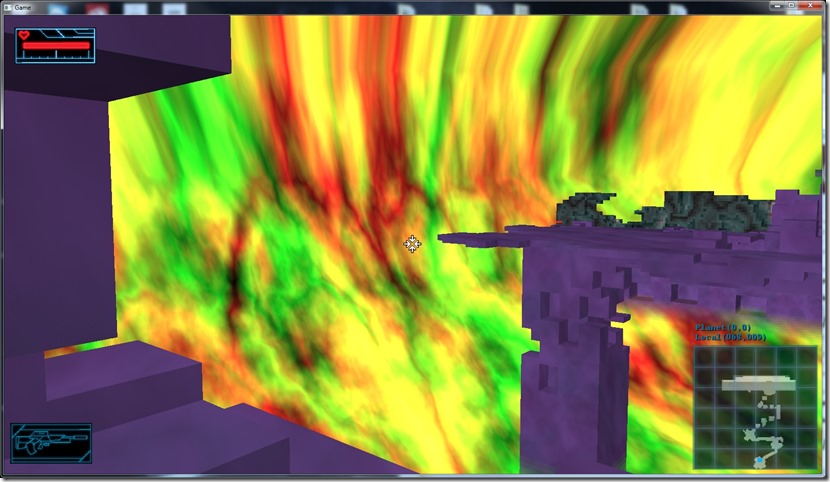

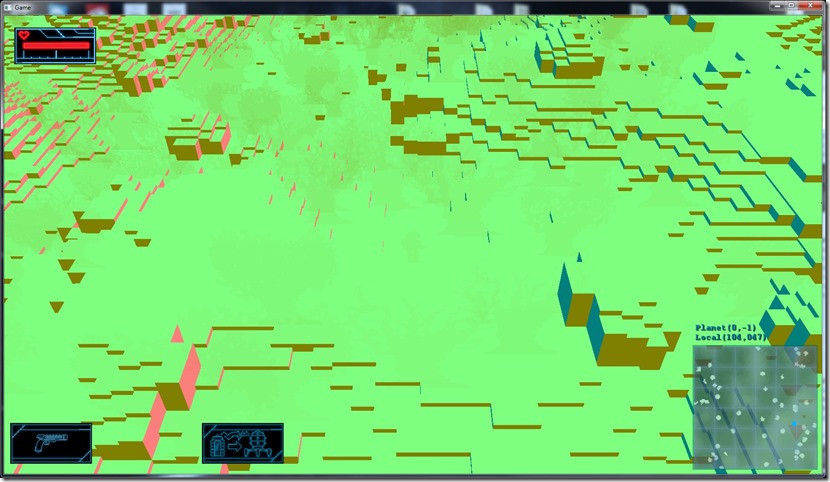

Time has been a bit tight lately (just back from holidays, so work has piled up), but today, between house chores and resting, I managed to add rendering of the normals:

Here you can see the normals mapped to colors… For example, the lime green corresponds to a normal vector of (0,1,0).
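For reference, a common way to map normals to colors, which I’m assuming here rather than quoting from the game: each component of a unit normal goes from [-1, 1] to [0, 1]. With it, (0,1,0) becomes (0.5, 1, 0.5), a light green. The function names are hypothetical.

```cpp
#include <cassert>

struct Vec3 { float x, y, z; };

// Assumed mapping: remap each normal component from [-1, 1] to a color channel in [0, 1].
Vec3 normal_to_color(const Vec3& n) {
    return {n.x * 0.5f + 0.5f, n.y * 0.5f + 0.5f, n.z * 0.5f + 0.5f};
}

// Inverse mapping, as a later pass (e.g. ambient occlusion) would read the buffer back.
Vec3 color_to_normal(const Vec3& c) {
    assert(c.x >= 0.0f && c.x <= 1.0f && c.y >= 0.0f && c.y <= 1.0f && c.z >= 0.0f && c.z <= 1.0f);
    return {c.x * 2.0f - 1.0f, c.y * 2.0f - 1.0f, c.z * 2.0f - 1.0f};
}
```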

Now I want to combine both of these (depth and normal) into a single 4-channel buffer. The problem is that I’m using alpha testing to render the trees properly, so I need to move that alpha test from the renderer itself into the shaders (which kind of sucks), so that I can alpha-test the trees but still output the depth in the alpha channel.

This will be a bit of a handful for tomorrow… :\

Now listening to “Iron Savior” by “Iron Savior”

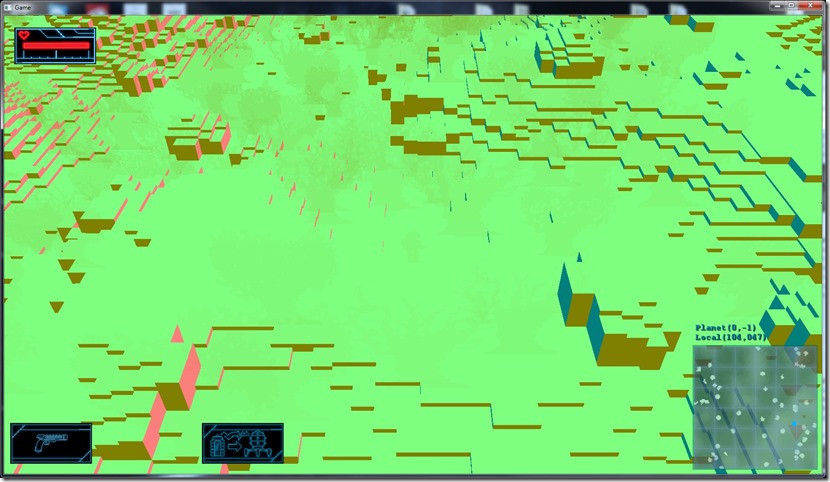

Today I started working on the screenspace effects…

Screenspace effects are effects that use only the “rendered image”, or more precisely, the 2D representation of the scene (not its full 3D nature).

A lot of effects fall under this category (and a whole lot more are being worked on all the time)… For my purposes, I’m mainly interested in checking out ambient occlusion, glow and fast decals.

Step one is to write more information about the scene into an off-screen (invisible) buffer, namely the depth, normal and emissive component of each pixel, which requires me to tweak the existing render pipeline…

I started with the depth:

Black is closer to the camera, white is farther away… Due to the way the perspective matrix works, the depth differences don’t really show up in the colors without a lot of work I don’t want to do at this stage…

Here it is in first-person view… Still a bit tricky to see, but the visual aspect isn’t very important, since this will be placed in a buffer for use by the shaders further down the line, not displayed.
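To put numbers on the perspective-matrix remark, here’s a small sketch using the standard Direct3D-style projected depth, d = f / (f - n) * (1 - n / z) for a view distance z between the near plane n and the far plane f, next to a linear alternative. The near/far values used below are made up, not the game’s, and the function names are hypothetical.

```cpp
#include <cassert>
#include <cmath>

// Depth written by a Direct3D-style perspective projection (depth range [0, 1]).
float projected_depth(float z, float n, float f) {
    assert(0.0f < n && n <= z && z <= f);
    return f / (f - n) * (1.0f - n / z);
}

// Linear alternative: spreads the range evenly between the near and far planes.
float linear_depth(float z, float n, float f) {
    assert(0.0f < n && n <= z && z <= f);
    return (z - n) / (f - n);
}

// Quantize to an 8-bit channel, as a plain RGBA8 render target would store it.
int to_8bit(float v) {
    return static_cast<int>(std::lround(v * 255.0f));
}
```

With n = 1 and f = 1000, a point 2 units away already has a projected depth of about 0.5, and one 10 units away about 0.9, so almost everything looks white. In an 8-bit channel, points at 500 and 1000 units both end up as 255, while the linear version gives 127 for 500 units; that’s also why the depth needs a higher-precision buffer before it can feed the ambient occlusion.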

Now listening to “After” by “Ihsahn”

The transition between being on the ship and being on the planet was a bit abrupt and felt disconnected in some way, so I’ve added a small cutscene that shows the ship landing on the planet…

This way the game feels more “continuous”…

Now listening to “Nifelvind” by “Finntroll”

Link of the Day: This is absolutely amazing! The stuff you can do with computers:

The AI in Gateway searches for an attack slot on the target. That’s used to stop the AIs from clustering on a single position. They request an attack slot from the target and keep refreshing it; if they stop targeting it, they eventually lose the slot.

Until now, the attack slots were assigned in order, so the first enemy would always try to use the first attack slot, which positionally corresponds to the northeast of the target, even if the enemy was coming from the southwest… This made it LOOK stupid (more a visual issue than a gameplay one, since it would start shooting as soon as it was in range, so it’s basically the same game-wise), so I tweaked the code to take the enemy’s position relative to the player into account when choosing the attack slot, which looks much better and smarter!
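A minimal sketch of direction-aware slot selection (not Gateway’s actual code; AttackSlots, request(), refresh(), kSlotCount and kTimeout are hypothetical): slots are evenly spaced around the target, the attacker gets the free slot whose direction best matches where it’s coming from, and a slot frees itself if its holder stops refreshing it. Here slot 0 points along +x rather than northeast, which is an arbitrary choice.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Hypothetical attack-slot ring around one target.
class AttackSlots {
public:
    static constexpr int kSlotCount = 8;
    static constexpr float kTimeout = 1.0f;  // seconds without refresh before a slot frees up

    // (dx, dy) = attacker position minus target position.
    // Picks the free slot whose direction is closest to the attacker's direction,
    // instead of simply the first free slot. Returns -1 if every slot is taken.
    int request(int attacker_id, float dx, float dy, float now) {
        const float pi = 3.14159265f;
        const float attacker_angle = std::atan2(dy, dx);
        int best = -1;
        float best_diff = 0.0f;
        for (int i = 0; i < kSlotCount; ++i) {
            if (is_taken(i, now)) continue;
            const float slot_angle = 2.0f * pi * i / kSlotCount;
            // Smallest absolute angle between the two directions, in [0, pi].
            const float diff = std::fabs(std::remainder(attacker_angle - slot_angle, 2.0f * pi));
            if (best == -1 || diff < best_diff) { best = i; best_diff = diff; }
        }
        if (best != -1) slots_[best] = {attacker_id, now};
        return best;
    }

    // The holder calls this periodically while it still targets this target.
    void refresh(int slot, int attacker_id, float now) {
        assert(slot >= 0 && slot < kSlotCount);
        if (slots_[slot].holder == attacker_id) slots_[slot].last_refresh = now;
    }

private:
    struct Slot { int holder = -1; float last_refresh = 0.0f; };
    std::array<Slot, kSlotCount> slots_{};

    // A slot counts as free if nobody holds it or its holder stopped refreshing.
    bool is_taken(int i, float now) const {
        return slots_[i].holder != -1 && now - slots_[i].last_refresh < kTimeout;
    }
};
```

An attacker coming from the west (-x) gets slot 4 instead of slot 0, and a second attacker from the same side gets one of the neighboring slots (3 or 5), so they still spread out around the target.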

In the process I found a balancing issue… The gun fires too fast, which means the enemy doesn’t give the player much of a chance if there are no obstacles around.

I could tweak the variables (the weapon’s cycle time) to adjust for this, but I think that’s a very unintuitive way to tweak the weapons, so I’m thinking of adding a new “shoot decision system” for the AIs, based not on the weapon’s actual cycle but on a fire/cooldown mechanism, which will probably be much more intuitive to balance…
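One possible shape for that fire/cooldown mechanism, as a hypothetical sketch (FireController, burst_size, shot_interval and cooldown are made-up names, not the game’s system): the AI fires a short burst, then waits out a cooldown, so the breathing room given to the player becomes an explicit parameter instead of a side effect of the weapon’s cycle time.

```cpp
#include <cassert>

// Hypothetical burst/cooldown fire controller for an AI.
struct FireController {
    int burst_size;       // shots per burst
    float shot_interval;  // seconds between shots inside a burst
    float cooldown;       // seconds of silence after a burst

    int shots_left_in_burst = 0;
    float timer = 0.0f;  // time until the next shot; the first shot fires immediately

    // Advances time by dt and returns how many shots are fired during this step.
    int update(float dt) {
        assert(burst_size > 0 && shot_interval > 0.0f && cooldown > 0.0f);
        int shots = 0;
        timer -= dt;
        while (timer <= 0.0f) {
            if (shots_left_in_burst == 0) shots_left_in_burst = burst_size;  // start a new burst
            ++shots;
            --shots_left_in_burst;
            // Short wait inside a burst, long wait once the burst is finished.
            timer += (shots_left_in_burst > 0) ? shot_interval : cooldown;
        }
        return shots;
    }
};
```

With burst_size = 3, shot_interval = 0.25 and cooldown = 1.0, the AI fires at t = 0, 0.25 and 0.5, then stays silent until t = 1.5.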

Now listening to “Voices of Doom” by “Mono Inc.”

Now playing “Wasteland 2”… The game is rather cool, but it has some awful flaws, mostly because of its intention to stay true to its roots, like too many “skills” that are basically pointless except in one mission or another… I understand what they wanted to do with the game, but, for example, if you don’t give the “Brute Force” skill to one character, you won’t be able to do some parts of the main story properly. You can say “yeah, but those are decisions”, but it’s a false choice… That skill lets me move that specific door in that mission, but I have no interest in having it for the rest of the game (so far), so the game is asking me to choose between a super-useful skill like “machine guns” (which I use every 2 minutes) and one that might be useless for most of the game. A system like Shadowrun’s skill traversal would be better, in my opinion (if you don’t have a skill, you can use an associated attribute… in this case, if you didn’t have the “Brute Force” skill, you’d be able to use “Strength” as a substitute, at a penalty. The end result could be the same, but I wouldn’t have the feeling the game cheated). Other than that, the atmosphere is really great, the gameplay is nice and fluid (a bit hard, but that’s a matter of taste) and the story is interesting (bar some stupid stuff that’s done to enhance gameplay but just sounds silly).

Somewhere in the last updates (OpenGL and even before), I broke the explosion effect:

Fixing it was relatively easy, but then I noticed that the explosion was missing smoke particles:

Unfortunately, adding smoke particles would require some depth sorting, or else the explosion would lose its brightness, which is not what I wanted…

After mucking around with the factors, adding more particles, etc., I decided to forgo the smoke…

The explosion is a very fast effect, so the lack of smoke won’t be very noticeable unless you’re looking for it… The brightness is more important (we associate brightness with energy, and an explosion is all about energy).

I might revisit this later if I start missing it very much, but to be honest I don’t believe it will be an actual problem for anyone except me… Order-Independent Transparency would help here, but I can’t do that with the DX9 renderer (I might be able to with the OpenGL one), and its cost would narrow the range of target GPUs…
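The ordering problem is easy to show with a few numbers. A minimal sketch (the types and function names are hypothetical): additive blending, the usual choice for bright fire particles, gives the same result in any order, while standard alpha blending, the usual choice for smoke, does not, and smoke blended over the fire dims it. That’s why smoke would need a back-to-front sort, or an order-independent technique.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

struct Rgb { float r, g, b; };

// Additive blending (typical for fire/sparks): dst += src. Order doesn't matter.
Rgb blend_additive(Rgb dst, Rgb src) {
    return {dst.r + src.r, dst.g + src.g, dst.b + src.b};
}

// Standard alpha ("over") blending (typical for smoke): dst = src * a + dst * (1 - a).
// Order matters, so the particles must be drawn back to front.
Rgb blend_over(Rgb dst, Rgb src, float a) {
    return {src.r * a + dst.r * (1.0f - a),
            src.g * a + dst.g * (1.0f - a),
            src.b * a + dst.b * (1.0f - a)};
}

struct Particle { float view_depth; Rgb color; float alpha; };

// Sort farthest-first so each smoke puff is blended over the ones behind it.
void sort_back_to_front(std::vector<Particle>& particles) {
    std::sort(particles.begin(), particles.end(),
              [](const Particle& a, const Particle& b) { return a.view_depth > b.view_depth; });
}
```

Blending a dark smoke puff (0.25 grey at alpha 0.5) over a fire color with red 1.0 brings red down to 0.625, while adding the same two colors gives the same value whichever is drawn first.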

The explosions look pretty good already; they’re the best explosions I’ve ever made!

Anyway, tweaking effect parameters is a drag. The next step in my game development efforts (after this game is done) is to make an engine-independent effect system with an associated editor (so I can use it for both Cantrip and Spellbook)… It will be a lot of work, but it will pay for itself after 10 or 20 effects, and I think it will noticeably improve the effect quality…

Now listening to “Reflection” by “Paradise Lost”

Still working on the game polish, focusing on the weapon effects, mostly replacing the old “line drawing” effect with a better one that uses actual quad rendering and pre-multiplied alpha:

Looks much better than the old version (of which I can’t find any screenshots).


The polish phase is fun but very time-consuming!
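On the pre-multiplied alpha used for the weapon effects: a minimal sketch of the blend math as it’s usually formulated (the standard technique, not code from the game; the names are hypothetical). The nice property is that a single blend state, D3DBLEND_ONE / D3DBLEND_INVSRCALPHA in D3D9 terms, covers both regular transparency and additive glow, since a pre-multiplied color with alpha 0 simply adds to what’s behind it.

```cpp
#include <cassert>

struct Rgba { float r, g, b, a; };

// Convert a straight-alpha color to pre-multiplied form (rgb scaled by alpha).
Rgba premultiply(Rgba c) {
    return {c.r * c.a, c.g * c.a, c.b * c.a, c.a};
}

// Pre-multiplied "over": dst = src + dst * (1 - src.a).
// Corresponds to SRCBLEND = ONE, DESTBLEND = INVSRCALPHA.
Rgba blend_premultiplied(Rgba dst, Rgba src) {
    assert(src.a >= 0.0f && src.a <= 1.0f);
    const float k = 1.0f - src.a;
    return {src.r + dst.r * k, src.g + dst.g * k, src.b + dst.b * k, src.a + dst.a * k};
}
```

A half-transparent red over mid-grey gives the usual alpha-blended result (red 0.75), while a color with alpha 0 just adds its rgb on top, which is what a glowing beam wants.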

Now listening to “To The Metal” by “Gamma Ray”

Now quit playing “Lords of the Fallen”… I got this one because it’s an easier version of “Dark Souls”, but I still don’t understand the pleasure of getting my butt kicked over and over and over again… The “experience loss” mechanic in particular is very annoying. I like challenges, but the type of challenge in both Dark Souls and Lords of the Fallen isn’t fun for me. I’d rather spend weeks on a World of Warcraft boss than 2 hours on a Lords of the Fallen boss, especially because the player movement is so slow (yeah, real armor would be very heavy, etc., but it’s annoying nevertheless).